The impact of Artificial Intelligence (AI) on the financial sector has drawn considerable interest in recent years. AI-related ethical considerations and governance in organizations, especially in the financial sector, involve scrutinizing AI algorithms for bias in light of emerging regulatory standards and addressing privacy issues. This working paper takes an exploratory approach, drawing on recent research in the domain, to examine AI-related ethical considerations and governance in select countries. It analyzes the multidimensional facets of AI and the risks that organizations should assess and mitigate, focusing on country-specific guidelines. We found that some countries had stringent AI guidelines, while in others the guidelines were comparatively loose. To address these inconsistencies, we developed a model, with an accompanying rationale, that covers the relevant dimensions of AI ethics and governance. The model can help close the current gaps in the financial sectors of the countries studied, or of countries studied in future using this framework.

Keywords: Artificial Intelligence, financial sector, ethical considerations, UAE, India

I. INTRODUCTION

Artificial Intelligence (AI) has gained significant momentum in digital technology usage worldwide over the past few years. Numerous applications of AI have been found in various areas, including predictive analytics and identification, which have been widely adopted in diverse contexts such as education, recruitment, training, marketing, governance, finance, and security [1].

With AI's increasing usage and implementation, challenges and risks have arisen, along with inevitable questions about how AI systems should conform to ethical standards. These issues have triggered a global discussion of AI ethics, and governments and policymakers in different countries have tried to develop and propose AI-related guidelines. Recent research has highlighted that the discussion of AI governance dimensions is fragmented and may not encompass the entire gamut of governance issues. Because technology alone has limitations, governments must collaborate on a globally accepted mechanism to strategize and coordinate sustainable AI ethics and governance [2]. AI has had, and will continue to have, a significant impact on global society; therefore, there is a critical need for effective regulations and policies to govern AI usage [3]. AI researchers also agree that identifying the appropriate use of AI is a priority [4]. Discerning and evaluating the transparency, fairness, and accountability of AI systems is imperative to build user confidence in them [5].

The research has two objectives:

- To examine the AI ethics and governance guidelines related to the financial sector in select nations

- To develop a viable AI ethics and governance model that complements the shortcomings of the current country-wise guidelines.

Our research addresses gaps in AI ethical guidelines and considerations. Some countries had uniform, standardized guidelines, while others had only generic ones. Our contribution is a framework that highlights and recommends the essential AI ethical guidelines and considerations for the financial sector.

II. METHODOLOGY

A. Procedure

Based on our objectives, we studied the AI ethics and governance guidelines of the chosen countries, which were selected for their rapid progress, financial activity, and diversity. Furthermore, we analysed recently published research studies and white papers, including the White & Case regulatory trackers [1]-[9]. Additionally, we extensively studied each country's AI guidelines as published on its official websites.

We examined each selected country, highlighting its policies and regulatory guidelines, and ultimately developed a proposed framework that can be referenced in framing AI ethics and governance. In particular, we examined the prevailing laws and regulations related to AI and explored how AI can be effectively governed by the business and government sectors, especially in the key geopolitical powers. A systematic literature review [6] outlined that AI governance solutions can provide valuable proposals for developing comprehensive AI governance systems and frameworks.

B. Countries Studied

United Arab Emirates

The United Arab Emirates has established an Artificial Intelligence Office that actively participates in international AI forums, aiming for transparency, accountability, and ethical compliance. It supports global AI governance, security, and development alliances while promoting responsible AI use to ensure safety, privacy, and international stability. The UAE's charter for developing and using artificial intelligence aims to make the UAE the leading nation in AI by 2031. The policy promotes a nurturing environment for advancing AI and seeks to establish robust governance principles that foster global cooperation and drive innovation, promoting economic growth and enhancing the quality of life. Its primary objective is to position the UAE as a global leader in AI while upholding its core values, namely Progress, Collaboration, Society, Ethics, Sustainability, and Safety. These values underpin the UAE AI Charter, driving economic diversification and innovation while fostering international cooperation in this field.

There are no laws or regulations regarding AI governance in the finance sector. However, data protection rules exist and have been amended to address AI-related developments [10]. The Central Bank of the UAE (CBUAE), along with the Securities and Commodities Authority (SCA), the Dubai Financial Services Authority (DFSA) of the Dubai International Financial Centre, and the Financial Services Regulatory Authority (FSRA) of Abu Dhabi Global Market, has charted out guidelines for financial institutions using enabling technologies. The predominant principles are data protection, control functions, independent review, and skills, knowledge, and expertise.

India

India’s approach to artificial intelligence regulation is sector-driven. The Responsible AI for India document, released by the Ministry of Electronics and Information Technology (MeitY), outlines key principles emphasizing transparency, accountability, and fairness to address AI bias and discrimination. An Advisory Group, chaired by the Principal Scientific Adviser, has been formed to develop an AI-specific regulatory framework for India. Under its guidance, the Subcommittee on AI Governance and Guidelines Development was established to provide actionable recommendations for AI governance in India. The Subcommittee examined key governance issues, studied existing frameworks, conducted a gap analysis, and ultimately proposed an enhanced, comprehensive approach to ensure the reliability and accountability of AI systems [11].

The Securities and Exchange Board of India (SEBI) issued a circular in January 2019 on reporting requirements for AI and machine learning applications and systems offered and used in finance. This can be seen as an interim form of regulatory oversight until a comprehensive framework is adopted, as the circular merely requires companies to disclose how the AI is trained and what data has been used. These requirements do not provide a risk-free environment for AI in the finance sector, but they enable potential users to access basic details about AI models and their training data. However, the circular does not legally restrict the use of AI systems developed with unverified or potentially biased data. The absence of sector-specific AI regulations leaves gaps in accountability, requiring a more comprehensive legislative approach [10]. India strives to balance fostering innovation with ensuring that AI benefits its people while mitigating the risks associated with the technology [7].

European Union

The European Union adopted the Artificial Intelligence Act in 2024, establishing a legal framework for regulating Artificial Intelligence within the European Union. The Act aims to ensure that AI systems adhere to fundamental safety, rights, and ethical principles in the face of the potential risks associated with AI models, thereby generating trust in these models [12]. The AI Act classifies AI applications into four risk levels: unacceptable risk, high risk, transparency risk, and minimal or no risk. Market surveillance authorities observe, investigate, and enforce AI regulations, while fundamental rights protection authorities have access to information, cooperation, and investigations into AI-related violations, ensuring that privacy and non-discrimination laws are upheld. While the primary objective of the AI Act is to establish AI regulatory frameworks and safeguard the privacy and ethical integrity of sectors such as finance, it also fosters innovation and development, as it enables companies to develop and test general-purpose AI models before releasing them for public use. National authorities provide companies with testing environments that simulate real-world conditions, allowing them to grow in the AI sector and deliver high-quality, trustworthy AI models [13].

The AI Act categorizes AI systems by risk and regulates each system according to its level of risk, thereby enhancing security and ethical compatibility. Alongside regulation, the EU promotes safe innovation by providing a development environment in which companies' AI systems can undergo real-world simulations, enhancing quality while minimizing risk and boosting technological advancement. In the financial sector, the AI Act covers systems used for fraud and embezzlement detection, credit scoring, customer scoring, investment optimisation and asset management decisions, and insurance underwriting.
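The risk-tiered approach described above can be illustrated with a minimal Python sketch. It is not drawn from the AI Act's legal text: the tier names follow the four levels listed in this section, the three high-risk use cases and their controls follow Table I, and the identifiers `RiskTier`, `USE_CASE_TIERS`, and `required_controls` are hypothetical names introduced only for illustration.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk levels named in this section."""
    UNACCEPTABLE = "unacceptable risk"
    HIGH = "high risk"
    TRANSPARENCY = "transparency risk"
    MINIMAL = "minimal or no risk"


# Illustrative mapping only: Table I lists these financial use cases as high risk.
# The dictionary keys are hypothetical labels, not terms from the AI Act.
USE_CASE_TIERS = {
    "credit_scoring": RiskTier.HIGH,
    "insurance": RiskTier.HIGH,
    "investment": RiskTier.HIGH,
}

# Controls that Table I associates with high-risk financial AI in the EU.
HIGH_RISK_CONTROLS = ["risk assessment", "audit logs", "GDPR compliance for data use"]


def required_controls(use_case: str) -> list[str]:
    """Return the controls a use case would need under this simplified mapping."""
    tier = USE_CASE_TIERS.get(use_case)
    if tier is None:
        # The sketch refuses to guess a tier for use cases it does not know.
        raise KeyError(f"use case not classified in this sketch: {use_case}")
    return list(HIGH_RISK_CONTROLS) if tier is RiskTier.HIGH else []
```

Calling `required_controls("credit_scoring")` returns the three controls listed for high-risk systems. An unlisted use case raises an error rather than defaulting to a tier, since assigning a tier is a legal judgement that this sketch does not attempt.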

China

China has adopted a stricter and more control-based approach to Artificial Intelligence regulations, aiming to control its development while appreciating its strategic advantages [9]. Some major requirements are:

- AI-driven financial services must adhere to the risk management guidelines.

- AI models must be fair, explainable, and transparent. This establishes the benchmark for evaluating AI algorithms to prevent bias and ensure ethical financial decision-making.

- Financial institutions providing AI services must disclose the work behind the AI decision-making, eliminating hidden biases in investment decisions.

- Some key compliance requirements for generative AI services include lawful use, data labelling rules, rules on training data, content moderation, and reporting mechanisms.

Hong Kong

Hong Kong has adopted an open and flexible approach to regulating Artificial Intelligence. It promotes technological advancement and economic growth while ensuring consumer protection, financial stability, and risk mitigation. AI deployment in various sectors, particularly the financial sector, is regulated through a blend of guidelines, industry standards, and legal frameworks. The Securities and Futures Ordinance (SFO) serves as the primary legal framework for regulating the securities and futures market in the territory. It applies to AI applications that aid financial decisions, such as investment and trading, and requires that such AI models operate without bias and in an ethical manner. The SFO promotes transparency, integrity, and stability, thereby protecting consumers and enhancing the reliability of these AI models. All risks and information must be disclosed to clients before any investment decisions are made, and the logic behind investment suggestions should be provided to help consumers understand the market and protect their financial interests [14].

Hong Kong has undergone rapid development in AI applications within the financial sector, including AI-driven wealth management, credit scoring, and trading advisory models. The government regulates these AI tools and ensures that they align with key factors, including fairness, transparency, and consumer protection. Key principles applied in the AI-driven financial sector include model validation, auditability, vendor oversight, data protection, cybersecurity, and risk mitigation, as outlined by the Hong Kong Monetary Authority [15].

The predominant attributes that were identified after a detailed examination have been highlighted below, country-wise and with an emphasis on the implications for the financial sector:

TABLE I: COUNTRY-WISE AI GUIDELINES

| Country/Region | Legal Framework / Strategy | Key Focus Areas | Financial Sector Relevance |
| --- | --- | --- | --- |
| China | Personal Information Protection Law (PIPL), AI Guidelines, Cybersecurity Law | Strong accountability & penalties; Privacy through legal basis & consent; Data lifecycle governance; Risk-based monitoring & enforcement; Regulatory sandbox support; Government-curated datasets | Heavily regulated financial AI; Strict vendor oversight; Requires model validation, fairness, and security; PIPL applies to financial data handling |
| United Arab Emirates | Public policy document issued by the Minister of State for Artificial Intelligence and Digital Economy and the Remote Work Applications Office | Strong governance principles promote global cooperation; Drive innovation to enhance economic growth and quality of life | The Financial Free Zones consist of Dubai International Financial Centre (DIFC) and the Abu Dhabi Global Market (ADGM). No law or regulation governs the implementation of Artificial Intelligence in the Finance sector. Mainland UAE consists of the remainder outside the Financial Free Zones. While there are no laws or regulations for implementing AI in the Financial Sector, amendments have been made to existing data protection legislation that applies in the DIFC to capture AI-related developments, including a recent amendment to Article 10 of the DIFC Data Protection Regulations. |
| Hong Kong | Data Privacy Ordinance, HKMA Fintech Reg, SFC guidelines | Vendor risk management; Transparent AI deployment; Compliance with KYC/AML; Encouragement for innovation within limits | Focus on Fintech AI & RegTech; Vendor audits & reviews are mandatory; AI credit scoring and robo-advisory need clear oversight |
| United States | NIST AI RMF, Executive Orders, FTC guidance | Sector-specific approach; Innovation-driven; Risk management & bias mitigation; Algorithmic accountability | Regulated by sector (e.g., SEC, CFPB); Use of AI in fraud detection, trading, and credit scoring under scrutiny; No federal AI law, but strong agency control |
| European Union | EU AI Act (upcoming), GDPR | Risk-tiered AI classification; Fundamental rights-based governance; High-risk AI under strict regulation; Emphasis on transparency, safety, and fairness | AI for credit scoring, insurance, and investment = high risk; Mandatory risk assessment, audit logs; GDPR compliance for data use |
| Singapore | AI Model Governance Framework (voluntary), MAS sandbox | Explainability and accountability; Encourages responsible innovation; Human oversight of AI; Testing under secure environments | MAS Regulatory Sandbox for Fintech; Clear expectations for AI in finance; Voluntary frameworks are adopted widely |
| Canada | Directive on Automated Decision-Making, AIA tool | Algorithmic Impact Assessment; Transparency and explainability; Human-in-the-loop design; Ethics & fairness embedded | Public AI use must go through AIA; Push for fairness in automated credit and loan approvals; Transparency is required in automated financial decisions |
| United Kingdom | ICO, FCA regulations [16] | Pro-innovation principles; Regulator-led enforcement; Fairness, accountability, data protection; Sector-specific guidelines | FCA explores AI in financial services; Emphasis on fair outcomes and customer protection; Encourages internal governance & audits |
| Japan | Social Principles of AI [17] | Human-centric AI; Safety, transparency, and trust; Guidelines for development; Cross-border data cooperation | Promotes AI in financial innovation; Sector-based guidance with ethical oversight; AI investment advisors and credit systems under ethical norms |
| South Korea | AI Ethics Guidelines (voluntary), AI Basic Act (draft) | Transparency and accountability; Inclusiveness and human rights; Regulatory support for innovation; Government R&D funding | AI used in banking and fintech encouraged; Ethics guidelines promote non-discrimination in lending/credit; Still developing stronger laws |
| India | Responsible AI Strategy (NITI Aayog), DPDP Act 2023 | Fairness, inclusiveness, and privacy; No central AI law yet; Pilot testing and use-case regulation; Focus on the public sector & governance | RBI and SEBI exploring AI for supervision; Digital lending and credit scoring AI under regulatory radar; Lack of unified law, but moving toward a stronger policy base |

The study sets out the prevailing guidelines country-wise. China can serve as a benchmark because of its guidelines: strict regulations oversee the financial sector, and emphasis is placed on privacy-related aspects. In the UAE, neither the financial free zones, namely the Dubai International Financial Centre (DIFC) and Abu Dhabi Global Market (ADGM), nor the mainland have laws or regulations governing AI in the financial sector. Nevertheless, existing data protection legislation has been amended to capture AI-related developments.

Hong Kong focuses on Fintech areas, with compulsory vendor audits and KYC compliance. The AI guidelines of the United States are sector-specific; no federal AI law exists, but agencies carry out the monitoring. The European Union treats AI used for credit scoring, insurance, and investment as high risk. The Monetary Authority of Singapore (MAS) has developed the Smart Financial Centre, which permits experimentation with new technologies to support the latest FinTech developments. Canada applies Algorithmic Impact Assessment and stresses transparency in automated financial decisions. In the United Kingdom, the Financial Conduct Authority (FCA) monitors the impact of AI on financial services. Japan encourages AI's role in financial services and provides sector-based guidance. South Korea is at a nascent stage in its AI initiatives, and although some guidelines exist, they are not strictly binding. In India, the Reserve Bank of India (RBI) and the Securities and Exchange Board of India (SEBI) monitor AI activities.

III. DEVELOPMENT OF THE MODEL

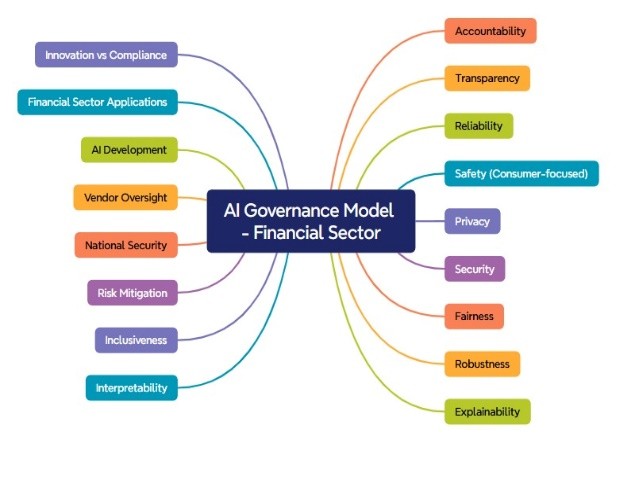

An in-depth study of these guidelines reveals the salient dimensions. Accountability ensures clear ownership of AI actions by the individuals and organizations involved; transparency promotes openness about how AI systems operate, particularly regarding data and decision-making. Reliability means that AI must perform consistently and predictably under various real-world conditions; safety implies protecting users from financial or psychological harm arising from AI decisions. Privacy, based on China’s PIPL, ensures that personal and sensitive data is protected at all stages, and security ensures that financial AI systems are protected from malicious attacks and unauthorized access. Fairness requires that AI treat every user equally, avoiding favoritism, discrimination, or exclusion. Furthermore, we covered other dimensions: robustness (withstanding errors, misuse, and unpredictable real-world scenarios); explainability (the ability of users to comprehend AI decisions); interpretability (enabling a deeper understanding of AI logic for regulators and developers); inclusiveness (accessible and fair for all users, regardless of their background or ability); risk mitigation (identifying and addressing risks throughout the AI lifecycle); national security (protecting national interests and preventing foreign entities' misuse of AI in finance); vendor oversight (oversight of external AI tools or services that financial institutions introduce); AI development (developing, training, and enhancing AI for financial applications); financial sector applications; and innovation versus compliance.
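One way such a list of dimensions might be put to use is as a checklist against which a jurisdiction's guidelines are compared. The following Python sketch is only an illustration under that assumption: the dimension names come from the paragraph above, while `coverage_gaps`, `coverage_ratio`, and the sample input are hypothetical and do not represent any country's actual guidelines.

```python
# Dimension names taken from the paragraph above (lower-cased for matching).
FRAMEWORK_DIMENSIONS = frozenset({
    "accountability", "transparency", "reliability", "safety", "privacy",
    "security", "fairness", "robustness", "explainability",
    "interpretability", "inclusiveness", "risk mitigation",
    "national security", "vendor oversight", "ai development",
    "financial sector applications", "innovation versus compliance",
})


def _normalize(covered):
    """Lower-case and trim dimension names, rejecting names outside the framework."""
    names = {name.strip().lower() for name in covered}
    unknown = names - FRAMEWORK_DIMENSIONS
    if unknown:
        raise ValueError(f"not a framework dimension: {sorted(unknown)}")
    return names


def coverage_gaps(covered):
    """Return, in alphabetical order, the dimensions a guideline does not address."""
    return sorted(FRAMEWORK_DIMENSIONS - _normalize(covered))


def coverage_ratio(covered):
    """Return the share of framework dimensions that a guideline addresses."""
    return len(_normalize(covered)) / len(FRAMEWORK_DIMENSIONS)


# Hypothetical guideline that addresses only three dimensions.
sample_guideline = {"Transparency", "Accountability", "Fairness"}
print(coverage_gaps(sample_guideline))
print(round(coverage_ratio(sample_guideline), 2))
```

A simple set difference treats every dimension as equally weighted and either present or absent. That is a deliberate simplification; a fuller assessment would need to grade how strictly each dimension is enforced.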

The proposed framework based on the above insights is provided in “Fig. 1”.

FIGURE 1: THE AI GOVERNANCE MODEL

IV. CONCLUSION

In this working paper, we examined country-specific AI guidelines and ethical considerations, emphasizing the financial sector, and highlighted the implications and insights derived from the proposed model. The salient findings of our research are as follows. First, AI guidelines and ethical considerations across the countries studied lack uniformity in the financial sector, and even in sector-wise specifications. Second, if the AI guidelines are considered as a continuum, countries like China and the United States of America sit at the higher end. India and the UAE, with emerging AI regulations, stand at the mid-level. Finally, at the lower end of the scale, countries like South Korea are at early stages of formulating AI guidelines. Our study has some limitations. Only eleven countries and regions were examined for their AI guidelines and ethical considerations. Future studies can cover more countries across the globe and develop cross-comparisons. Additionally, to address the gaps in this exploratory research, future studies can use qualitative methods (interviews with AI policy- and decision-makers) and analyse the perceptions of AI users (B2B and B2C) in the financial sector through empirical research.

REFERENCES

[1] Daly, A., Hagendorff, T., Li, H., Mann, M., Marda, V., Wagner, B., Wang, W. W., & Witteborn, S. (2019, July 08). Artificial Intelligence, Governance and Ethics: Global Perspectives. The Chinese University of Hong Kong Faculty of Law Research Paper No. 2019-15, University of Hong Kong Faculty of Law Research Paper No. 2019/033. http://dx.doi.org/10.2139/ssrn.3414805

[2] Jelinek, T., Wallach, W. & Kerimi, D. (2021). Policy brief: the creation of a G20 coordinating committee for the governance of artificial intelligence. AI Ethics, 1, 141–150. https://doi.org/10.1007/s43681-020-00019-y

[3] Qian, Y., Siau, K.L., & Nah, F.F. (2024). Societal impacts of artificial intelligence: Ethical, legal, and governance issues. Societal Impacts, 3, 100040. https://doi.org/10.1016/j.socimp.2024.100040

[4] Roberts, H., Cowls, J., Morley, J., Taddeo, M., Wang, V., & Floridi, L. (2021). The Chinese approach to artificial intelligence: an analysis of policy, ethics, and regulation. AI & Society, 36(1), 59–77.

[5] Shin, D. (2020). User Perceptions of Algorithmic Decisions in the Personalized AI System: Perceptual Evaluation of Fairness, Accountability, Transparency, and Explainability. Journal of Broadcasting & Electronic Media, 64(4), 541–565. https://doi.org/10.1080/08838151.2020.1843357

[6] Batool, M., Sanumi, O., & Jankovic, J. (2024). Application of artificial intelligence in materials science, with a special focus on fuel cells and electrolyzers. Energy and AI, 18(1), 100424. DOI:10.1016/j.egyai.2024.100424

[7] Pillay, T. (2024, Sept 05). Ashwini Vaishnaw: Minister of Electronics and Information Technology, India. Time. https://time.com/7012817/ashwini-vaishnaw/

[8] White & Case (2024, May 13). AI Watch: Global regulatory tracker- India. Retrieved from https://www.whitecase.com/insight-our-thinking/ai-watch-global-regulatory-tracker-india

[9] White & Case (2025, March 31). AI Watch: Global regulatory tracker- China. Retrieved from https://www.whitecase.com/insight-our-thinking/ai-watch-global-regulatory-tracker-china

[10] UAE legislation (2024, 02 Sept). UAE’s International Stance on Artificial Intelligence Policy. Retrieved from https://uaelegislation.gov.ae/en/policy/details/uae-s-international-stance-on-artificial-intelligence-policy

[11] AI Governance (2025, Jan 06). Report on AI governance guidelines development. India AI. Retrieved from https://indiaai.gov.in/article/report-on-ai-governance-guidelines-development

[12] AI Act (n.d.). Shaping Europe’s digital future. European Commission. Retrieved from https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai

[13] Europarl (2023, June 08). EU AI Act: first regulation on artificial intelligence. European Parliament. https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence

[14] Hong Kong e-Legislation (n.d.). View Legislation. Retrieved from https://www.elegislation.gov.hk/hk/cap571

[15] Hong Kong Monetary Authority (2019, Nov 01). High-level Principles on Artificial Intelligence. Retrieved from https://brdr.hkma.gov.hk/eng/doc-ldg/docId/20191101-1-EN

[16] A pro-innovation approach to AI regulation (2023, March 29). Command Paper Number: 815. HH Associates Ltd, UK. ISBN 978-1-5286-4009-1

[17] Habuka, H. (2023, Feb 14). Japan’s approach to AI regulation and its impact on the 2023 G7 Presidency. Center for Strategic & International Studies. https://www.csis.org/analysis/japans-approach-ai-regulation-and-its-impact-2023-g7-presidency