This study aimed to produce a short paper submission on developing research in artificial intelligence (AI) governance, showcasing methodological choices and critically reflecting on initial insights. The work is an element of ongoing research at the proposal stage of a PhD program. The researcher took AI in nuclear energy as the general area of inquiry, focusing specifically on the gap related to governance. A literature search used relevant keywords associated with AI governance in nuclear energy, and a concise literature review was conducted, addressing methodological considerations. An interpretivist, qualitative approach was adopted, using simple random sampling and semi-structured interviews that are planned but have not yet been conducted. Initial insights, which are preliminary and mostly framework-driven, indicate that, similar to the financial and health sectors, the nuclear industry requires its own domain-specific AI implementation framework to govern itself.

Keywords: Artificial Intelligence implementation, Nuclear Applications, AI Governance, XAI, Black-box nature

I. INTRODUCTION

Artificial intelligence (AI) has been researched from many perspectives, across multiple industries and locations. In the nuclear industry, safety remains the highest priority. New technology implementations must be governed appropriately to mitigate risks and ensure safety and regulatory compliance. Current literature discusses AI implementations; however, an empirical gap exists within the nuclear sector, indicating that research must be conducted to address the governance of these implemented AI solutions. RE & Ermetov (2024) state that the early debates about AI were mainly theoretical, but that focus has shifted to the practical implications of AI. Furthermore, Liu (2024) argues that any attempt to regulate AI should not follow the norm but aim to regulate the underlying technology. This serves as my justification for a qualitative approach to bridge this gap.

A. Research Objectives

1. To critically review AI implementations in the nuclear sector.

2. To critically analyse the data from AI implementations in new nuclear plants to evaluate the governance applied.

3. To critically evaluate the findings as to how governance was achieved when implementing AI solutions in new Middle Eastern nuclear power plants.

4. To develop new academic insight into how AI implementations can be governed at nuclear plants.

B. Research Questions

1. Do nuclear plant employees perceive their experience in AI implementations to be aligned with a governance model or framework?

2. What process do new Middle Eastern nuclear power plants use to govern AI solutions?

II. LITERATURE REVIEW

This study utilises two theoretical models to explore AI governance during implementation at nuclear plants. The two models are the Technology Readiness and Acceptance Model (TRAM), as detailed by Lin, Shih, and Sher (2007), and the Standard Nuclear Performance Model (SNPM) adopted in the study by Lee, Kim, and Kim (2021).

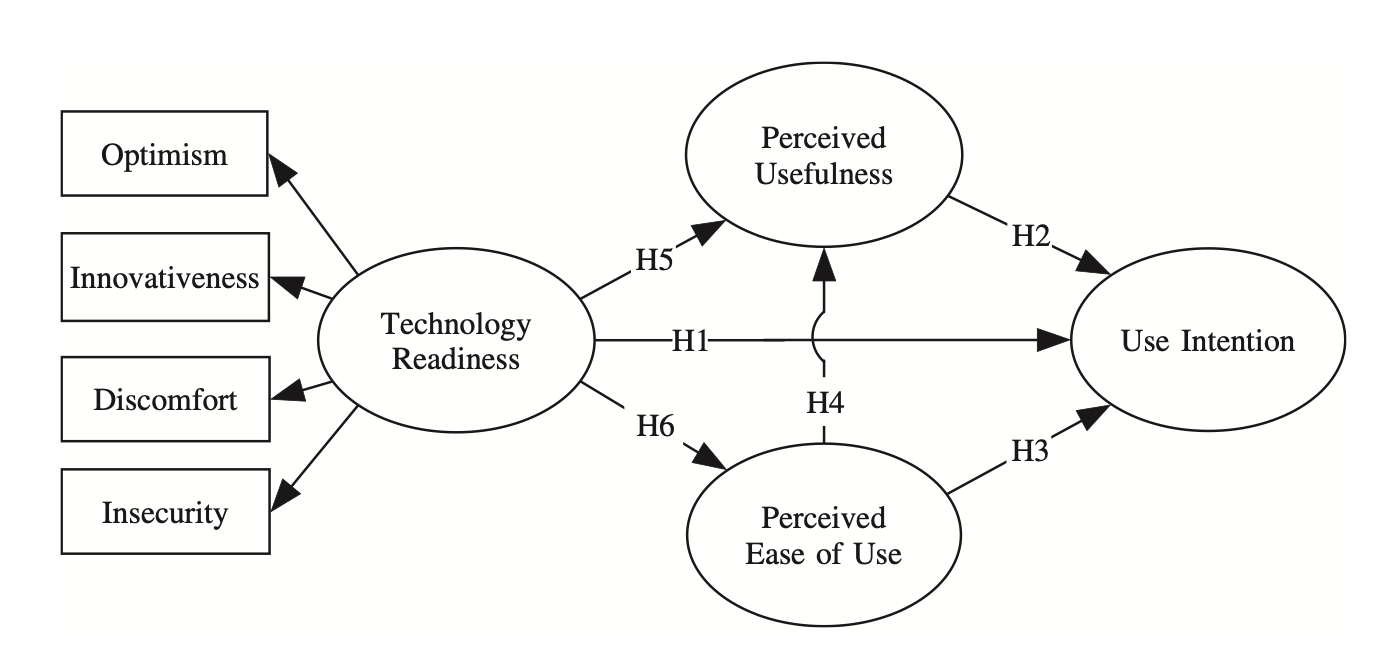

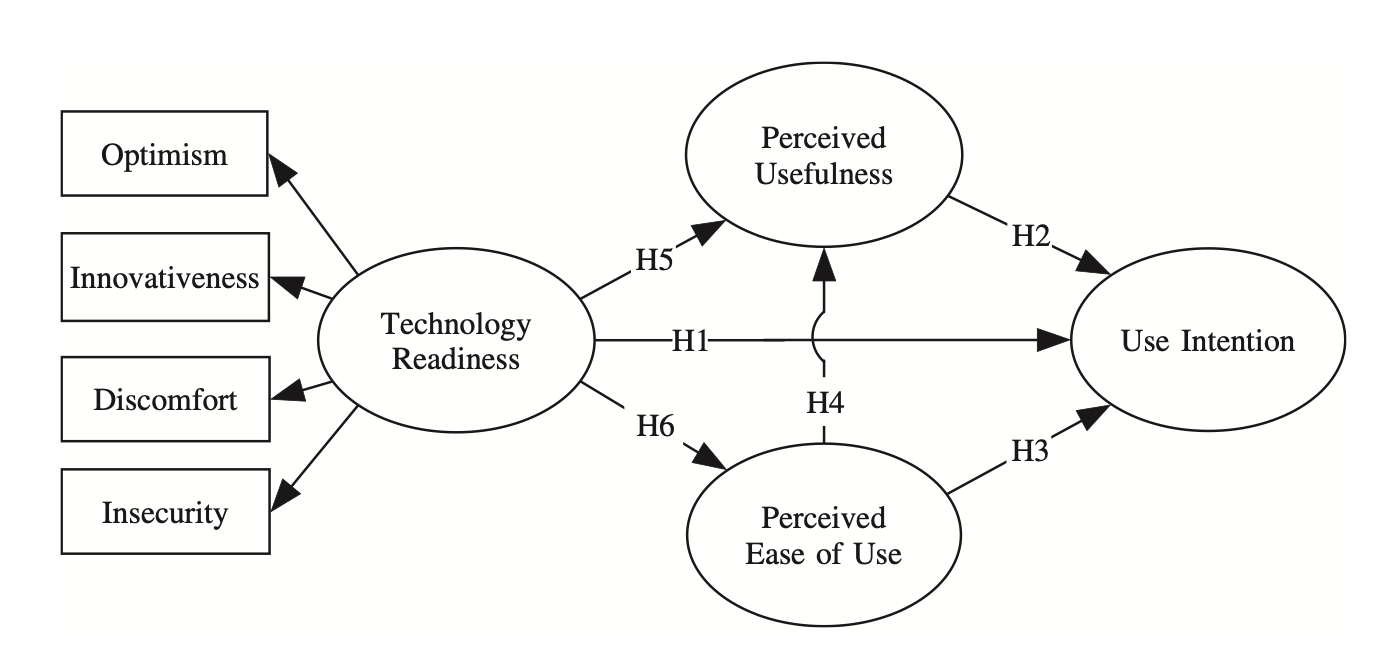

Fig. 1. The TRAM. Source: Lin, Shih & Sher (2007), p. 646

As shown in Figure 1, the TRAM combines the Technology Readiness and Technology Acceptance Models with key influencing variables. This model addresses two ends of the spectrum related to technological advancement: digital maturity on the Technology Readiness side and user acceptance (Use Intention) on the Technology Acceptance side. These two elements of the TRAM are intertwined and, in the context of this study, highly relevant. Jou et al. (2009) speak to this in what they describe as the conservative implementation of AI. The gap in their approach is that it does not address the governance aspect of AI implementation.

Lin, Shih, and Sher (2007) provide evidence of TRAM in a marketing setting; however, decision-making is approached differently in a conservative, safety-conscious new nuclear plant. Motivators, drivers, and innovation direction are often based on organisational goals. Therefore, there is a greater need for a governance structure to oversee AI implementations at nuclear plants. The influence of TRAM can thus be tested by directing questions to interviewees regarding the factors that played a role in overall technology readiness and evaluating whether an assessment was conducted.

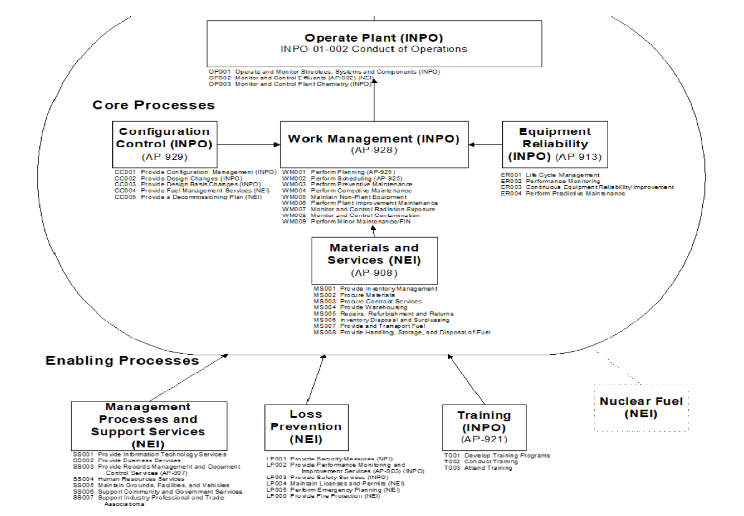

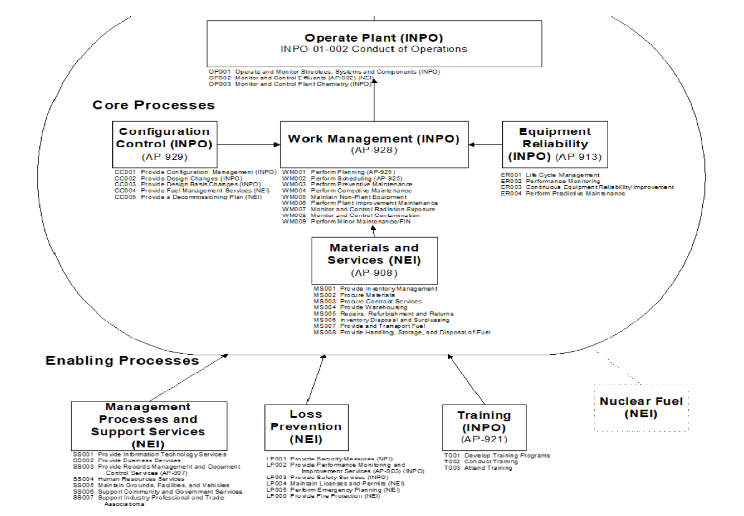

Fig. 2. The SNPM. Source: Lee, Kim & Kim (2021), p. 80

The SNPM shown in Figure 2 is a core nuclear performance model developed by the Nuclear Energy Institute. It provides a standard framework for nuclear plants to apply best practice standards in all core areas to maintain regulatory compliance and navigate international peer reviews conducted by the International Atomic Energy Agency (IAEA), the Institute of Nuclear Power Operations (INPO), and the World Association of Nuclear Operators (WANO). The SNPM establishes the Business Process Model for each functional control area (Business Functions like Operations, Maintenance, and Engineering) and manages electricity generation through this framework.

For example, Information and Communication Technology (ICT) and Operational Technology (OT) are part of the Support Services Process. Lee, Kim & Kim (2021) review this against an Engineering Model in their paper; however, the SNPM does not stand alone, and these processes are integrated. The Enterprise Resource Planning (ERP) tool is set up in most nuclear plants based on these processes. The Master Data used within the ERP also flows from one module to another. For instance, when a Preventative Maintenance Task is planned, spares and labour are planned with it, and because these carry a cost, the Finance, Engineering, Procurement, Maintenance, and Work Management functions are all involved in one task. In our AI age, these integrated processes must be managed, governed, and regulated appropriately. To implement any AI use case, this master data must be of sufficient quality, standardisation, and volume.

From a governance perspective, the SNPM provides an integrated view of how business processes are linked within a nuclear power plant. Any change to the software should therefore consider this integration, and these integration points should be factored into implementing AI at new nuclear plants. Interview questions should therefore probe business process maturity and change frequency. Similarly, there is a direct need to assess whether the assumptions of Lee, Kim, and Kim (2021) remain valid for the governance of AI implementations.

The conceptual framework is based on Hart (2018, p. 184). Although this study relates to a very technical field, it examines a social phenomenon and is intended to generate theory. Conceptually, developing a new theory involves using the literature review and the research questions to guide the creation of a new conceptual model. At a high level, the researcher believes that the SNPM influences the TRAM. Testing this idea requires robust interview questions informed by the literature, which the qualitative approach is designed to support.

Nuclear plants operate on the principle of conservative decision-making. This implies that all decisions prioritise safety, specifically nuclear safety, above all else. The SNPM provides a basis for these safety assumptions. Jou et al. (2009) state that automation design must be conservatively implemented for nuclear power plants to remain safely operational. This statement summarises the current AI debate surrounding the practical implications of AI implementations, as highlighted by RE & Ermetov (2024).

Another related topic is explainable AI, or XAI, which encompasses technological solutions designed for human oversight, monitoring, and management of the processes on which it is based. Agarwal et al. (2022) showed how this could be done by adopting XAI in nuclear. The nuclear industry is highly safe and ultra-conservative. Most rules, standards, and regulations have been in place for years, making this industry unique, even if others might view it as old and outdated. This outdated mentality is why the TRAM is applicable as a theoretical model.

With advancements in AI technology, the industry faces significant challenges, including AI literacy, as covered by Long & Magerko (2020), and the development of core competencies. The SNPM provides a framework for nuclear training, which previously focused on plant-specific training rather than on new technological developments. While plant-specific training is well established, training related to technological advancements is insufficient. Several research areas have demonstrated the viability of applying AI in nuclear plants. In some cases, it has already been implemented, albeit without a governance framework, as Huang et al. (2023) show in their review.

Today's challenge is the lack of governance and regulatory frameworks for AI implementations in safety-critical components or systems at nuclear plants. Suman (2021) confirms that AI implementations were not carried out within this industry due to regulatory concerns. Supporting this, Cancila et al. (2024), working with the French regulator, show that they applied experience from non-nuclear AI implementations to nuclear AI implementations. Contradicting this is the study by Lu et al. (2020), which demonstrates how AI applications were introduced in various aspects of nuclear plants. Another perspective is provided by Reuel & Undheim (2024), who state that rapid AI development challenges policymaking and governance in the context of Generative AI. The literature indicates that nuclear power plants lack academic guidance on governance and regulatory frameworks for the safe and successful implementation of AI in this safety-critical environment.

Taeihagh (2021) argues that we will reap the benefits of AI once we understand and mitigate its associated risks. Butcher & Beridze (2019) echo this sentiment and clearly define governance as the mechanisms and processes that guide AI, utilising regulation as a legal framework. Yordanova (2022) covers the legal basis for AI in a discussion of the EU AI Act. The legal aspects are further addressed by Kurshan, Shen & Chen (2020) through their framework for self-regulation in financial services. Solaiman, Bashir & Dieng (2024) conducted a similar study but developed a framework for the health industry. Although these studies focus on the financial and health industries, the regulations imposed on both sectors are as stringent as those enforced by nuclear regulators. This implies that domain-specific regulation is required. Furthermore, self-regulation is evident in this approach. Anderljung et al. (2023) support the issue of self-regulation and draw attention to regulatory challenges based on AI implementations.

AI implementations at nuclear plants should be governed to ensure the safety of the plants, people, and the public. Jendoubi & Asad (2024) highlight the need for AI integration to enhance safety in nuclear power plants. They propose a system that utilises operational data to improve incident response; however, the study does not address the governance requirements. Further evidence of the need to enhance the safety of nuclear plants with AI implementations is provided by Sethu et al. (2023), who discuss using AI to mitigate human errors. Human Error Prevention is a continuous improvement aspect in a nuclear plant’s road to excellence. Contradicting this, Hall et al. (2024) used Human-Centred AI as a theoretical framework to show how Machine Learning could introduce automation at nuclear plants. Cancila et al. (2024) explain how AI is implemented in nuclear plants while acknowledging existing gaps. These gaps introduce risk.

Organisational culture, roles, and responsibilities are crucial for AI implementations. Most industries have adopted the Chief AI Officer role. Abonamah & Abdelhamid (2024) speak to this statement in their paper, highlighting the importance of senior leaders’ roles. Often, these positions are strategic and aligned to ensure success. Typically, this is expected from AI Steering Committees and other focused governance meetings. One of the objectives of this study is to evaluate the governance employed during AI implementations, and a significant aspect of governance is related to the organisational framework. The roles and responsibilities may be variables that either mitigate or contribute to risk. The study will need to explore these aspects in practice.

Nuclear plants demonstrate trust by explaining daily operations in simple terms. Suman (2021) illustrates how nuclear plants face challenges today and how AI in nuclear applications can address some of these issues. The focus on decision-making centres on AI's black-box nature. Furthermore, nuclear plants are licensed based on their ability to mitigate risk. This risk mitigation requires complete transparency with the most significant stakeholder, the public. Transparency builds trust, and when applied to AI implementations, the nuclear industry should adopt XAI as its foundation in the context of governance, risk, and compliance. This is supported by Huang et al. (2023), who consider XAI a technology that enhances transparency. Additionally, Sethu et al. (2023) studied the role of AI in risk mitigation within the Operations and Maintenance functions at a nuclear plant.

If,

Governance + Risk = Compliance

Then,

SNPM + TRAM = AI Governance Implementation

The general assumption is that if we consider the SNPM an extension of a governance framework, detailing core processes, and incorporate the TRAM, along with its variables and intended purpose for risk mitigation, we could achieve a basic level of compliance. This needs testing.

III. METHODOLOGY

Interpretivism was chosen to study the phenomenon of AI governance during its implementation at nuclear plants. This decision was based on the Heightening Awareness of Research Philosophy (HARP) test by Saunders, Lewis & Thornhill (2019). Furthermore, the choice of interpretivism aims to create meaning from the perceptions of AI governance during implementations at operational nuclear plants in the Middle East. The research strategy guiding this study is the Case Study method with a cross-sectional time horizon. Data collection will be conducted through semi-structured interviews. The planned sampling strategy is simple random sampling, chosen to ensure fairness, and Inductive Thematic Analysis will be employed to carry out the data analysis.
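As an illustration only, the simple random sampling step could be sketched as follows. The sampling frame, the anonymised participant identifiers, the sample size, and the seed are all hypothetical and do not come from this study; the function name `draw_simple_random_sample` is likewise an assumption made for the example.

```python
import random


def draw_simple_random_sample(sampling_frame, sample_size, seed=None):
    """Draw a simple random sample without replacement.

    Every member of the sampling frame has the same chance of selection.
    A fixed seed makes the draw reproducible for audit purposes.
    """
    frame = list(sampling_frame)
    if sample_size > len(frame):
        raise ValueError("sample_size exceeds the size of the sampling frame")
    rng = random.Random(seed)
    return rng.sample(frame, sample_size)


# Hypothetical, anonymised sampling frame of 40 eligible employees (P01..P40).
frame = [f"P{i:02d}" for i in range(1, 41)]

# Hypothetical sample size and seed, chosen only for this illustration.
participants = draw_simple_random_sample(frame, sample_size=8, seed=2024)
print(participants)
```

Recording the seed alongside the sampling frame would allow the selection to be reproduced later, which may help when documenting how interviewees were chosen.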

The methodological choice accompanying this is qualitative research, as guided by Maxwell (2013, p. 227), who views previous studies as guideposts to the design of future research. This is evidenced by the previous studies of Nyathi (2023) and Abdulhussein (2024), which employed qualitative research. The research aims to uncover hidden governance elements present during AI implementations, examine the processes used, and investigate why a governance framework remains absent in this safety-critical industry. An inductive approach will help formulate a roadmap or pattern that can be used to build a theory. Interpretivism has a disadvantage in that its findings are not generalisable and it lacks the rigour of repeatability and validity. To overcome this, a second set of interviews could be conducted at another newly built nuclear plant within the same geographic location in the Middle East to confirm whether the results are similar. However, access constraints make this impossible, which is a limitation of the study.

Inductive Thematic Analysis will be used to analyse the transcripts from these interviews. The data will first be coded and then grouped as themes develop. As per Saldaña (2021), a code is a word or short phrase that assigns a summative attribute to a portion of the data. The primary reasons for this approach are its alignment with the research philosophy and its simplicity and flexibility, even though it may take significantly longer to process than other choices. The weakness of this method is the potential for inconsistencies among the datasets, which will require more rigour. The advantage of inductive coding is that it does not limit the researcher to a narrow, focused codebook or predetermined codes (a priori codes).
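The code-then-theme sequence described above can be sketched in a few lines. The excerpts, codes, and themes below are invented purely for illustration; no interviews have been conducted, so none of this represents data or findings from the study. The helper `group_by_theme` is a hypothetical name.

```python
from collections import defaultdict

# Invented coded segments: (participant, excerpt, code).
# In inductive coding, the codes emerge from reading the transcripts.
coded_segments = [
    ("P01", "We had no formal sign-off for the model.", "missing approval step"),
    ("P02", "The safety committee reviewed the tool.", "committee oversight"),
    ("P03", "Nobody could explain the output.", "black-box concern"),
    ("P01", "Operators did not trust the recommendation.", "black-box concern"),
]

# Researcher-defined grouping of codes into themes, decided after coding.
code_to_theme = {
    "missing approval step": "Governance process",
    "committee oversight": "Governance process",
    "black-box concern": "Explainability and trust",
}


def group_by_theme(segments, mapping):
    """Group coded segments under their theme; unmapped codes stay visible."""
    themes = defaultdict(list)
    for participant, excerpt, code in segments:
        theme = mapping.get(code, "Unassigned")
        themes[theme].append((participant, code, excerpt))
    return dict(themes)


themes = group_by_theme(coded_segments, code_to_theme)
theme_counts = {theme: len(items) for theme, items in themes.items()}
print(theme_counts)
```

Keeping unmapped codes under an "Unassigned" label, rather than dropping them, is one way to keep the codebook open while themes are still developing.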

IV. INSIGHTS AND IMPLICATIONS

The United Arab Emirates (UAE) does not have an explicit AI Act but does possess a well-defined AI strategy and an integrated AI policy. Recently, more countries have joined the EU in developing specific AI legislation. The absence of an AI Act does not in itself indicate a lack of governance; rather, it highlights a governance gap in building a framework. Yordanova (2022) detailed the EU AI Act in two significant parts: risk identification and risk mitigation for AI implementations. These represent gaps within the existing AI implementations in the nuclear industry, indicating a need for deeper insights.

The literature contains well-documented AI use cases for nuclear energy, each associated with a certain level of implementation risk. Classifying these use cases by risk significance is a beneficial practice that can be derived from the EU AI Act. This classification would help ensure that AI implementation is governed from a risk perspective, fostering transparency and trust with the local regulator and the public. Supplementing the risk classification with the business processes of the SNPM and the existing safety committees at operating nuclear plants, while addressing the perceived gaps from the TRAM that remain unknown until the planned interviews are conducted, would enable the documentation of a governance framework for AI implementation within new nuclear plants.

Suman (2021), Agarwal et al. (2022) and Huang et al. (2023) all covered XAI and the black-box nature of AI within nuclear plants. Suman (2021) and Agarwal et al. (2022) identified similar research gaps: the need for more operational data, and reliability and interpretability concerns related to the black-box nature of AI. These insights can potentially affect the trust relationship between the nuclear operator and the regulator. Nuclear Operating Licenses are all risk-based, and demonstrating the ability to mitigate risk requires explanation.
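A minimal sketch of how AI use cases might be classified by risk significance is given below. The four tier names follow the risk levels commonly associated with the EU AI Act (unacceptable, high, limited, minimal); the classification rules, the use cases, their attributes, and their assignment to SNPM processes are hypothetical assumptions made for this example and are not taken from the Act, the SNPM, or any plant.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    """Tier names mirror the EU AI Act's risk levels."""
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


@dataclass(frozen=True)
class AIUseCase:
    name: str
    snpm_process: str                # hypothetical mapping to an SNPM process
    safety_related: bool             # touches safety-related systems or decisions
    acts_without_human_review: bool  # output is applied with no human in the loop
    explainable: bool                # output can be explained to the regulator


def classify(use_case: AIUseCase) -> RiskTier:
    """Hypothetical rules for illustration only, not regulatory criteria."""
    if use_case.safety_related and use_case.acts_without_human_review:
        return RiskTier.UNACCEPTABLE
    if use_case.safety_related:
        return RiskTier.HIGH
    if not use_case.explainable:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


# Invented use cases, ordered here from lowest to highest expected tier.
use_cases = [
    AIUseCase("Document search assistant", "Support Services", False, False, True),
    AIUseCase("Spare-parts demand forecast", "Maintenance", False, False, False),
    AIUseCase("Operator decision support", "Operations", True, False, True),
    AIUseCase("Autonomous control action", "Operations", True, True, False),
]

register = {uc.name: classify(uc) for uc in use_cases}
for name, tier in register.items():
    print(f"{name}: {tier.name}")
```

A register of this kind could be reviewed by an existing safety committee, with the higher tiers triggering closer scrutiny before implementation.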

V. CONCLUSION

This paper identifies a gap in the governance of AI implementations within the nuclear industry, specifically focusing on a new build plant in the Middle East. The work discussed is part of ongoing research at the proposal stage of a PhD program. AI implementations must be governed appropriately so that risks are mitigated, safety is improved, and regulatory compliance is ensured. They cannot proceed in the nuclear industry without suitable governance. The EU’s legal basis for AI implementation is the EU AI Act. This study is developing research; consequently, the research questions cannot yet be answered and remain open. Since this work is ongoing, interviews are planned but have not yet been conducted, and the insights within this paper should be considered preliminary and largely framework-driven. The key focus is thus to create awareness in the nuclear industry of the perceived current practice of AI implementations without proper governance. If AI implementations in the nuclear industry continue without a governance framework, we introduce risks into our plants, which may result in non-compliance.

Thanks and appreciation go out to the giant upon whose shoulders I could stand, and who allowed me to learn and grow through this process. I would also like to thank my family for their patience, love, and support on this new journey.

REFERENCES

[1] RE, Y.R.Y. and Ermetov, E., 2024. Ethical considerations in the development and deployment of AI. Innovations in Science and Technologies, 1(5), pp.26-42.

[2] Liu, H.Y., 2024. Why is AI regulation so difficult? https://www.researchgate.net/profile/Hin-Yan-Liu/publication/377577308_Why_is_AI_regulation_so_difficult/links/65ae508a9ce29c458b91dcc1/Why-is-AI-regulation-so-difficult.pdf

[3] Saunders, M., Lewis, P. and Thornhill, A., 2019. Research methods for business students. 8th Ed. Pearson education.

[4] Maxwell, J.A., 2013. Qualitative research design: An interactive approach. SAGE.

[5] Nyathi, W.G., 2023. The role of artificial intelligence in improving public policymaking and implementation in South Africa (Doctoral dissertation, University of Johannesburg).

[6] Abdulhussein, M., 2024. The Impact of Artificial Intelligence and Machine Learning on Organizations Cybersecurity. Liberty University.

[7] Saldaña, J., 2021. The coding manual for qualitative researchers.

[8] Lin, C.H., Shih, H.Y. and Sher, P.J., 2007. Integrating technology readiness into technology acceptance: The TRAM model. Psychology & Marketing, 24(7), pp.641-657.

[9] Lee, S.D., Kim, J.W. and Kim, M.S., 2021. Development of Electronic Management System for improving the utilization of Engineering Model in Domestic Nuclear Power Plant. Journal of the Korean Society of Safety, 36(5), pp.79-85.

[10] Hart, C., 2018. Doing a literature review: Releasing the research imagination.

[11] Jou, Y.T., Yenn, T.C., Lin, C.J., Yang, C.W. and Chiang, C.C., 2009. Evaluation of operators’ mental workload of human–system interface automation in the advanced nuclear power plants. Nuclear Engineering and Design, 239(11), pp.2537-2542.

[12] Agarwal, V., Walker, C. M., Manjunatha, K. A., Mortenson, T. J., Lybeck, N. J., Hall, A. C., ... & Gribok, A. V. (2022). Technical Basis for Advanced Artificial Intelligence and Machine Learning Adoption in Nuclear Power Plants (No. INL/RPT-22-68942-Rev000). Idaho National Laboratory (INL), Idaho Falls, ID (United States).

[13] Long, D., & Magerko, B. (2020, April). What is AI literacy? Competencies and design considerations. In Proceedings of the 2020 CHI conference on human factors in computing systems (pp. 1-16).

[14] Huang, Q., Peng, S., Deng, J., Zeng, H., Zhang, Z., Liu, Y., & Yuan, P. (2023). A review of the application of artificial intelligence to nuclear reactors: Where we are and what's next. Heliyon, 9(3).

[15] Suman, S. (2021). Artificial intelligence in nuclear industry: Chimera or solution?. Journal of Cleaner Production, 278, 124022.

[16] Cancila, D., Daniel, G., Sirven, J. B., Chihani, Z., Chersi, F., & Vinciguerra, R. (2024). Research Directions on AI and Nuclear. In EPJ Web of Conferences (Vol. 302, p. 17005). EDP Sciences.

[17] Lu, C., Lyu, J., Zhang, L., Gong, A., Fan, Y., Yan, J., & Li, X. (2020). Nuclear power plants with artificial intelligence in industry 4.0 era: Top-level design and current applications—A systemic review. IEEE Access, 8, 194315-194332.

[18] Reuel, A., & Undheim, T. A. (2024). Generative AI needs adaptive governance. arXiv preprint arXiv:2406.04554.

[19] Taeihagh, A. (2021). Governance of artificial intelligence. Policy and society, 40(2), 137-157.

[20] Butcher, J., & Beridze, I. (2019). What is the state of artificial intelligence governance globally? The RUSI Journal, 164(5-6), 88-96.

[21] Yordanova, K. (2022). The EU AI Act-Balancing human rights and innovation through regulatory sandboxes and standardization.

[22] Kurshan, E., Shen, H., & Chen, J. (2020, October). Towards self-regulating AI: Challenges and opportunities of AI model governance in financial services. In Proceedings of the First ACM International Conference on AI in Finance (pp. 1-8).

[23] Solaiman, B., Bashir, A., & Dieng, F. (2024). Regulating AI in health in the Middle East: case studies from Qatar, Saudi Arabia and the United Arab Emirates. In Research handbook on health, AI and the law (pp. 332-354). Edward Elgar Publishing.

[24] Anderljung, M., Barnhart, J., Korinek, A., Leung, J., O'Keefe, C., Whittlestone, J., ... & Wolf, K. (2023). Frontier AI regulation: Managing emerging risks to public safety. arXiv preprint arXiv:2307.03718.

[25] Jendoubi, C., & Asad, A. (2024). A Survey of Artificial Intelligence Applications in Nuclear Power Plants. IoT, 5(4), 666-691.

[26] Sethu, M., Kotla, B., Russell, D., Madadi, M., Titu, N. A., Coble, J. B., ... & Khojandi, A. (2023). Application of artificial intelligence in detection and mitigation of human factor errors in nuclear power plants: a review. Nuclear Technology, 209(3), 276-294.

[27] Hall, A., Murray, P., Boring, R. L., & Agarwal, V. (2024). Human-centered and explainable artificial intelligence in nuclear operations. In Proceedings of the Human Factors and Ergonomics Society Annual Meeting (Vol. 68, No. 1, pp. 1563-1568). Sage CA: Los Angeles, CA: SAGE Publications.

[28] Abonamah, A. A., & Abdelhamid, N. (2024). Managerial insights for AI/ML implementation: a playbook for successful organizational integration. Discover Artificial Intelligence, 4(1), 22.